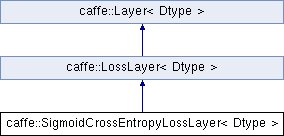

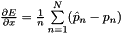

Computes the cross-entropy (logistic) loss ![$ E = \frac{-1}{n} \sum\limits_{n=1}^N \left[ p_n \log \hat{p}_n + (1 - p_n) \log(1 - \hat{p}_n) \right] $](form_163.png), often used for predicting targets interpreted as probabilities.


#include <sigmoid_cross_entropy_loss_layer.hpp>

Public Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| | SigmoidCrossEntropyLossLayer (const LayerParameter &param) | |
| virtual void | LayerSetUp (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Does layer-specific setup: your layer should implement this function as well as Reshape. |
| virtual void | Reshape (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Adjust the shapes of top blobs and internal buffers to accommodate the shapes of the bottom blobs. |
| virtual const char * | type () const | Returns the layer type. |

Public Member Functions inherited from caffe::LossLayer< Dtype >

| Return type | Member | Description |
| --- | --- | --- |
| | LossLayer (const LayerParameter &param) | |
| virtual int | ExactNumBottomBlobs () const | Returns the exact number of bottom blobs required by the layer, or -1 if no exact number is required. |
| virtual bool | AutoTopBlobs () const | For convenience and backwards compatibility, instruct the Net to automatically allocate a single top Blob for LossLayers, into which they output their singleton loss (even if the user didn't specify one in the prototxt, etc.). |
| virtual int | ExactNumTopBlobs () const | Returns the exact number of top blobs required by the layer, or -1 if no exact number is required. |
| virtual bool | AllowForceBackward (const int bottom_index) const | |

Public Member Functions inherited from caffe::Layer< Dtype >

| Return type | Member | Description |
| --- | --- | --- |
| | Layer (const LayerParameter &param) | |
| void | SetUp (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Implements common layer setup functionality. |
| Dtype | Forward (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Given the bottom blobs, compute the top blobs and the loss. |
| void | Backward (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom) | Given the top blob error gradients, compute the bottom blob error gradients. |
| vector< shared_ptr< Blob< Dtype > > > & | blobs () | Returns the vector of learnable parameter blobs. |
| const LayerParameter & | layer_param () const | Returns the layer parameter. |
| virtual void | ToProto (LayerParameter *param, bool write_diff=false) | Writes the layer parameter to a protocol buffer. |
| Dtype | loss (const int top_index) const | Returns the scalar loss associated with a top blob at a given index. |
| void | set_loss (const int top_index, const Dtype value) | Sets the loss associated with a top blob at a given index. |
| virtual int | MinBottomBlobs () const | Returns the minimum number of bottom blobs required by the layer, or -1 if no minimum number is required. |
| virtual int | MaxBottomBlobs () const | Returns the maximum number of bottom blobs required by the layer, or -1 if no maximum number is required. |
| virtual int | MinTopBlobs () const | Returns the minimum number of top blobs required by the layer, or -1 if no minimum number is required. |
| virtual int | MaxTopBlobs () const | Returns the maximum number of top blobs required by the layer, or -1 if no maximum number is required. |
| virtual bool | EqualNumBottomTopBlobs () const | Returns true if the layer requires an equal number of bottom and top blobs. |
| bool | param_propagate_down (const int param_id) | Specifies whether the layer should compute gradients w.r.t. a parameter at a particular index given by param_id. |
| void | set_param_propagate_down (const int param_id, const bool value) | Sets whether the layer should compute gradients w.r.t. a parameter at a particular index given by param_id. |

Protected Member Functions

| Return type | Member | Description |
| --- | --- | --- |
| virtual void | Forward_cpu (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Computes the cross-entropy (logistic) loss ![$ E = \frac{-1}{n} \sum\limits_{n=1}^N \left[ p_n \log \hat{p}_n + (1 - p_n) \log(1 - \hat{p}_n) \right] $](form_163.png), often used for predicting targets interpreted as probabilities. |
| virtual void | Forward_gpu (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Using the GPU device, compute the layer output. Fall back to Forward_cpu() if unavailable. |
| virtual void | Backward_cpu (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom) | Computes the sigmoid cross-entropy loss error gradient w.r.t. the predictions. |
| virtual void | Backward_gpu (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom) | Using the GPU device, compute the gradients for any parameters and for the bottom blobs if propagate_down is true. Fall back to Backward_cpu() if unavailable. |
| virtual Dtype | get_normalizer (LossParameter_NormalizationMode normalization_mode, int valid_count) | |

Protected Member Functions inherited from caffe::Layer< Dtype >

| Return type | Member | Description |
| --- | --- | --- |
| virtual void | CheckBlobCounts (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | |
| void | SetLossWeights (const vector< Blob< Dtype > *> &top) | |

Protected Attributes

| Type | Attribute | Description |
| --- | --- | --- |
| shared_ptr< SigmoidLayer< Dtype > > | sigmoid_layer_ | The internal SigmoidLayer used to map predictions to probabilities. |
| shared_ptr< Blob< Dtype > > | sigmoid_output_ | sigmoid_output stores the output of the SigmoidLayer. |
| vector< Blob< Dtype > * > | sigmoid_bottom_vec_ | bottom vector holder to call the underlying SigmoidLayer::Forward |
| vector< Blob< Dtype > * > | sigmoid_top_vec_ | top vector holder to call the underlying SigmoidLayer::Forward |
| bool | has_ignore_label_ | Whether to ignore instances with a certain label. |
| int | ignore_label_ | The label indicating that an instance should be ignored. |
| LossParameter_NormalizationMode | normalization_ | How to normalize the loss. |
| Dtype | normalizer_ | |
| int | outer_num_ | |
| int | inner_num_ | |

Protected Attributes inherited from caffe::Layer< Dtype >

| Type | Attribute | Description |
| --- | --- | --- |
| LayerParameter | layer_param_ | |
| Phase | phase_ | |
| vector< shared_ptr< Blob< Dtype > > > | blobs_ | |
| vector< bool > | param_propagate_down_ | |
| vector< Dtype > | loss_ | |

Detailed Description

template<typename Dtype>

class caffe::SigmoidCrossEntropyLossLayer< Dtype >

Computes the cross-entropy (logistic) loss ![$ E = \frac{-1}{n} \sum\limits_{n=1}^N \left[ p_n \log \hat{p}_n + (1 - p_n) \log(1 - \hat{p}_n) \right] $](form_163.png), often used for predicting targets interpreted as probabilities.

This layer is implemented as a single unit, rather than as a separate SigmoidLayer followed by a CrossEntropyLayer, because its gradient computation is more numerically stable. At test time, this layer can be replaced simply by a SigmoidLayer.

- Parameters

  - bottom: input Blob vector (length 2)
    - the scores ![$ x \in [-\infty, +\infty]$](form_164.png), which this layer maps to probability predictions ![$ \hat{p}_n = \sigma(x_n) \in [0, 1] $](form_165.png) using the sigmoid function (see SigmoidLayer).
    - the targets ![$ y \in [0, 1] $](form_167.png)
  - top: output Blob vector (length 1)
    - the computed cross-entropy loss: ![$ E = \frac{-1}{n} \sum\limits_{n=1}^N \left[ p_n \log \hat{p}_n + (1 - p_n) \log(1 - \hat{p}_n) \right] $](form_163.png)
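The numerical-stability remark can be made concrete with a short derivation. This is a sketch for the per-element term of the sum (one score $x_n$ and one target $p_n$, before normalization); it follows from the definition of the sigmoid and is not a transcription of the implementation:

```latex
\begin{aligned}
\ell(x_n, p_n)
  &= -\left[ p_n \log \sigma(x_n) + (1 - p_n) \log\bigl(1 - \sigma(x_n)\bigr) \right],
  \qquad \sigma(x) = \frac{1}{1 + e^{-x}} \\
  &= -p_n x_n + \log\left(1 + e^{x_n}\right) \\
  &= \max(x_n, 0) - p_n x_n + \log\left(1 + e^{-|x_n|}\right)
\end{aligned}
```

The last form never exponentiates a positive number, so it cannot overflow for large $|x_n|$, and it never evaluates $\log 0$, which a separate sigmoid followed by a logarithm can hit once $\hat{p}_n$ saturates to 0 or 1.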

Member Function Documentation

◆ Backward_cpu()

protected virtual

Computes the sigmoid cross-entropy loss error gradient w.r.t. the predictions.

Gradients cannot be computed with respect to the target inputs (bottom[1]), so this method ignores bottom[1] and requires !propagate_down[1], crashing if propagate_down[1] is set.

- Parameters

  - top: output Blob vector (length 1), providing the error gradient with respect to the outputs
  - propagate_down: see Layer::Backward. propagate_down[1] must be false as gradient computation with respect to the targets is not implemented.
  - bottom: input Blob vector (length 2)
    - the predictions; Backward computes their diff (the error gradient with respect to the predictions)
    - the labels, ignored as we can't compute their error gradients

Implements caffe::Layer< Dtype >.
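As a rough illustration of the gradient described for Backward_cpu, the sketch below uses the fact that the derivative of the per-element cross-entropy term with respect to the score is the sigmoid output minus the target. It is a standalone approximation, not the layer's code: `sigmoid_ce_grad`, `loss_weight`, and `normalizer` are hypothetical names, and scaling by a loss weight divided by a normalizer is an assumption about how the result is combined.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Logistic sigmoid, written so that exp() never receives a positive argument.
double sigmoid(double x) {
  if (x >= 0.0) return 1.0 / (1.0 + std::exp(-x));
  const double e = std::exp(x);
  return e / (1.0 + e);
}

// Hypothetical helper: gradient of the normalized loss with respect to each
// score. `loss_weight` stands in for the gradient arriving from the top blob
// and `normalizer` for the loss normalizer; both are illustrative assumptions.
std::vector<double> sigmoid_ce_grad(const std::vector<double>& scores,
                                    const std::vector<double>& targets,
                                    double loss_weight, double normalizer) {
  assert(scores.size() == targets.size());
  assert(normalizer > 0.0);
  std::vector<double> diff(scores.size());
  for (std::size_t i = 0; i < scores.size(); ++i) {
    // d/dx of -[p log s(x) + (1 - p) log(1 - s(x))] equals s(x) - p.
    diff[i] = (sigmoid(scores[i]) - targets[i]) * loss_weight / normalizer;
  }
  return diff;
}
```

No gradient is produced for the targets, which matches the requirement that propagate_down[1] be false.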

◆ Forward_cpu()

protected virtual

Computes the cross-entropy (logistic) loss ![$ E = \frac{-1}{n} \sum\limits_{n=1}^N \left[ p_n \log \hat{p}_n + (1 - p_n) \log(1 - \hat{p}_n) \right] $](form_163.png), often used for predicting targets interpreted as probabilities.

This layer is implemented as a single unit, rather than as a separate SigmoidLayer followed by a CrossEntropyLayer, because its gradient computation is more numerically stable. At test time, this layer can be replaced simply by a SigmoidLayer.

- Parameters

  - bottom: input Blob vector (length 2)
    - the scores ![$ x \in [-\infty, +\infty]$](form_164.png), which this layer maps to probability predictions ![$ \hat{p}_n = \sigma(x_n) \in [0, 1] $](form_165.png) using the sigmoid function (see SigmoidLayer).
    - the targets ![$ y \in [0, 1] $](form_167.png)
  - top: output Blob vector (length 1)
    - the computed cross-entropy loss: ![$ E = \frac{-1}{n} \sum\limits_{n=1}^N \left[ p_n \log \hat{p}_n + (1 - p_n) \log(1 - \hat{p}_n) \right] $](form_163.png)

Implements caffe::Layer< Dtype >.
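A minimal standalone sketch of a forward computation in this spirit, working directly on the raw scores rather than on sigmoid outputs. The helper names `stable_sigmoid_ce` and `sigmoid_ce_loss` are hypothetical, the ignore-label handling mirrors the has_ignore_label_ and ignore_label_ attributes only loosely, and dividing by the number of non-ignored elements is just one possible normalization assumed here for illustration.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Hypothetical helper: per-element loss in the overflow-safe form
// max(x, 0) - x * p + log(1 + exp(-|x|)).
double stable_sigmoid_ce(double x, double p) {
  return std::max(x, 0.0) - x * p + std::log1p(std::exp(-std::fabs(x)));
}

// Hypothetical helper: mean loss over the elements whose target is not the
// ignore label. With has_ignore_label = false every element is used.
double sigmoid_ce_loss(const std::vector<double>& scores,
                       const std::vector<double>& targets,
                       bool has_ignore_label = false,
                       int ignore_label = -1) {
  assert(scores.size() == targets.size());
  double loss = 0.0;
  int valid_count = 0;
  for (std::size_t i = 0; i < scores.size(); ++i) {
    if (has_ignore_label && static_cast<int>(targets[i]) == ignore_label) {
      continue;  // ignored elements add nothing to the loss or the count
    }
    loss += stable_sigmoid_ce(scores[i], targets[i]);
    ++valid_count;
  }
  // Guard against division by zero when every element is ignored.
  return loss / std::max(valid_count, 1);
}
```

Because the exponential only ever sees a non-positive argument, scores of very large magnitude produce a finite loss instead of an overflow or a log of zero.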

◆ get_normalizer()

protected virtual

Read the normalization mode parameter and compute the normalizer based on the blob size. If normalization_mode is VALID, the count of valid outputs will be read from valid_count, unless it is -1 in which case all outputs are assumed to be valid.
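The behaviour described above can be sketched as a small standalone function. Only the VALID mode and the -1 convention come from this page; the other mode names (FULL, BATCH_SIZE, NONE), the `outer_num`/`inner_num` interpretation, and the clamp to a minimum of 1 are assumptions made for illustration, and `sketch_normalizer` is a hypothetical name.

```cpp
#include <algorithm>
#include <cassert>

// Hypothetical stand-in for LossParameter_NormalizationMode.
enum class NormalizationMode { FULL, VALID, BATCH_SIZE, NONE };

// Sketch of a normalizer choice. outer_num is taken to be the batch size and
// inner_num the number of elements per batch item (both assumptions).
double sketch_normalizer(NormalizationMode mode, int outer_num, int inner_num,
                         int valid_count) {
  assert(outer_num >= 0 && inner_num >= 0);
  double normalizer = 1.0;
  switch (mode) {
    case NormalizationMode::FULL:
      normalizer = static_cast<double>(outer_num) * inner_num;
      break;
    case NormalizationMode::VALID:
      // -1 means no count was supplied: treat every output as valid.
      normalizer = (valid_count == -1)
                       ? static_cast<double>(outer_num) * inner_num
                       : static_cast<double>(valid_count);
      break;
    case NormalizationMode::BATCH_SIZE:
      normalizer = static_cast<double>(outer_num);
      break;
    case NormalizationMode::NONE:
      normalizer = 1.0;
      break;
  }
  // Clamp to at least 1 so an all-ignored batch does not divide by zero.
  return std::max(1.0, normalizer);
}
```

For example, with 4 batch items of 3 elements each, VALID with a valid_count of -1 gives 12, while a valid_count of 5 gives 5.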

◆ LayerSetUp()

virtual

Does layer-specific setup: your layer should implement this function as well as Reshape.

- Parameters

  - bottom: the preshaped input blobs, whose data fields store the input data for this layer
  - top: the allocated but unshaped output blobs

This method should do one-time, layer-specific setup. This includes reading and processing relevant parameters from the layer_param_. Setting up the shapes of top blobs and internal buffers should be done in Reshape, which will be called before the forward pass to adjust the top blob sizes.

Reimplemented from caffe::LossLayer< Dtype >.

◆ Reshape()

virtual

Adjust the shapes of top blobs and internal buffers to accommodate the shapes of the bottom blobs.

- Parameters

  - bottom: the input blobs, with the requested input shapes
  - top: the top blobs, which should be reshaped as needed

This method should reshape top blobs as needed according to the shapes of the bottom (input) blobs, as well as reshaping any internal buffers and making any other necessary adjustments so that the layer can accommodate the bottom blobs.

Reimplemented from caffe::LossLayer< Dtype >.
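To illustrate the kind of bookkeeping a reshape step might perform for this layer, the sketch below derives two counts from the input shapes. It is an assumption-laden illustration: this page lists outer_num_ and inner_num_ without describing them, so reading them as the batch size and the per-item element count is a guess, and `ShapeInfo`, `element_count`, and `reshape_sketch` are hypothetical names.

```cpp
#include <cassert>
#include <vector>

// Hypothetical record of the shape bookkeeping a reshape step might keep.
struct ShapeInfo {
  int outer_num;  // size of the first (batch) axis
  int inner_num;  // product of the remaining axes
};

// Number of elements described by a shape vector.
int element_count(const std::vector<int>& shape) {
  int n = 1;
  for (int d : shape) n *= d;
  return n;
}

ShapeInfo reshape_sketch(const std::vector<int>& scores_shape,
                         const std::vector<int>& targets_shape) {
  assert(!scores_shape.empty() && scores_shape[0] > 0);
  // Scores and targets must describe the same number of elements.
  assert(element_count(scores_shape) == element_count(targets_shape));
  ShapeInfo info;
  info.outer_num = scores_shape[0];
  info.inner_num = element_count(scores_shape) / scores_shape[0];
  return info;
}
```

Recomputing these values on every reshape, rather than once during setup, keeps them correct when the input batch size changes between forward passes.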

The documentation for this class was generated from the following files:

- include/caffe/layers/sigmoid_cross_entropy_loss_layer.hpp

- src/caffe/layers/sigmoid_cross_entropy_loss_layer.cpp
