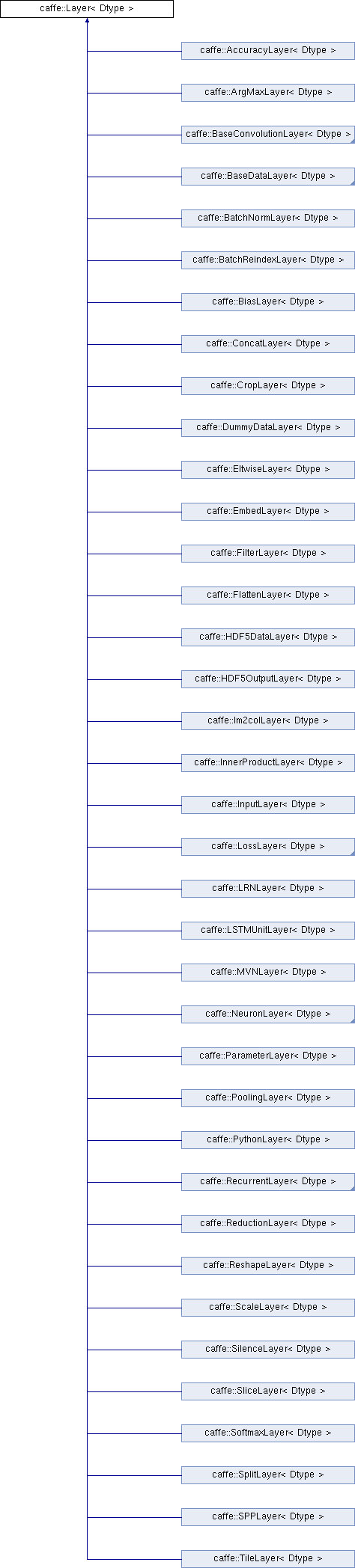

An interface for the units of computation which can be composed into a Net.

#include <layer.hpp>

Public Member Functions

| Return type | Member | Description |
|---|---|---|
| | Layer (const LayerParameter &param) | |
| void | SetUp (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Implements common layer setup functionality. |
| virtual void | LayerSetUp (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Does layer-specific setup: your layer should implement this function as well as Reshape. |
| virtual void | Reshape (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top)=0 | Adjust the shapes of top blobs and internal buffers to accommodate the shapes of the bottom blobs. |
| Dtype | Forward (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Given the bottom blobs, compute the top blobs and the loss. |
| void | Backward (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom) | Given the top blob error gradients, compute the bottom blob error gradients. |
| vector< shared_ptr< Blob< Dtype > > > & | blobs () | Returns the vector of learnable parameter blobs. |
| const LayerParameter & | layer_param () const | Returns the layer parameter. |
| virtual void | ToProto (LayerParameter *param, bool write_diff=false) | Writes the layer parameter to a protocol buffer. |
| Dtype | loss (const int top_index) const | Returns the scalar loss associated with a top blob at a given index. |
| void | set_loss (const int top_index, const Dtype value) | Sets the loss associated with a top blob at a given index. |
| virtual const char * | type () const | Returns the layer type. |
| virtual int | ExactNumBottomBlobs () const | Returns the exact number of bottom blobs required by the layer, or -1 if no exact number is required. |
| virtual int | MinBottomBlobs () const | Returns the minimum number of bottom blobs required by the layer, or -1 if no minimum number is required. |
| virtual int | MaxBottomBlobs () const | Returns the maximum number of bottom blobs required by the layer, or -1 if no maximum number is required. |
| virtual int | ExactNumTopBlobs () const | Returns the exact number of top blobs required by the layer, or -1 if no exact number is required. |
| virtual int | MinTopBlobs () const | Returns the minimum number of top blobs required by the layer, or -1 if no minimum number is required. |
| virtual int | MaxTopBlobs () const | Returns the maximum number of top blobs required by the layer, or -1 if no maximum number is required. |
| virtual bool | EqualNumBottomTopBlobs () const | Returns true if the layer requires an equal number of bottom and top blobs. |
| virtual bool | AutoTopBlobs () const | Return whether "anonymous" top blobs are created automatically by the layer. |
| virtual bool | AllowForceBackward (const int bottom_index) const | Return whether to allow force_backward for a given bottom blob index. |
| bool | param_propagate_down (const int param_id) | Specifies whether the layer should compute gradients w.r.t. a parameter at a particular index given by param_id. |
| void | set_param_propagate_down (const int param_id, const bool value) | Sets whether the layer should compute gradients w.r.t. a parameter at a particular index given by param_id. |

Protected Member Functions

| Return type | Member | Description |
|---|---|---|
| virtual void | Forward_cpu (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top)=0 | Using the CPU device, compute the layer output. |
| virtual void | Forward_gpu (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | Using the GPU device, compute the layer output. Fall back to Forward_cpu() if unavailable. |
| virtual void | Backward_cpu (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom)=0 | Using the CPU device, compute the gradients for any parameters and for the bottom blobs if propagate_down is true. |
| virtual void | Backward_gpu (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom) | Using the GPU device, compute the gradients for any parameters and for the bottom blobs if propagate_down is true. Fall back to Backward_cpu() if unavailable. |
| virtual void | CheckBlobCounts (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) | |
| void | SetLossWeights (const vector< Blob< Dtype > *> &top) | |

Protected Attributes

| Type | Name |
|---|---|
| LayerParameter | layer_param_ |
| Phase | phase_ |
| vector< shared_ptr< Blob< Dtype > > > | blobs_ |
| vector< bool > | param_propagate_down_ |
| vector< Dtype > | loss_ |

Detailed Description

template<typename Dtype>

class caffe::Layer< Dtype >

An interface for the units of computation which can be composed into a Net.

Layers must implement a Forward function, in which they take their input (bottom) Blobs (if any) and compute their output Blobs (if any). They may also implement a Backward function, in which they compute the error gradients with respect to their input Blobs, given the error gradients with respect to their output Blobs.
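As a rough illustration of this contract, the sketch below pairs a forward pass with its backward pass for a layer that doubles its input. `ToyBlob` and `DoubleLayer` are hypothetical stand-ins invented for this example, not Caffe types; a real layer would derive from caffe::Layer< Dtype > and operate on caffe::Blob objects.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical stand-in for caffe::Blob: values plus their gradients.
struct ToyBlob {
  std::vector<float> data;  // values computed in the forward pass
  std::vector<float> diff;  // error gradients filled in the backward pass
};

// Hypothetical layer computing top = 2 * bottom, element by element.
struct DoubleLayer {
  // Forward: read the bottom data, write the top data.
  void Forward(const ToyBlob& bottom, ToyBlob* top) const {
    top->data.resize(bottom.data.size());
    for (std::size_t i = 0; i < bottom.data.size(); ++i) {
      top->data[i] = 2.0f * bottom.data[i];
    }
  }

  // Backward: read the top gradient, write the bottom gradient.
  // By the chain rule, d(loss)/d(bottom) = 2 * d(loss)/d(top).
  void Backward(const ToyBlob& top, ToyBlob* bottom) const {
    assert(top.diff.size() == bottom->data.size());
    bottom->diff.resize(top.diff.size());
    for (std::size_t i = 0; i < top.diff.size(); ++i) {
      bottom->diff[i] = 2.0f * top.diff[i];
    }
  }
};
```

The gradient flows in the opposite direction to the data: Forward fills `top->data` from the bottom, while Backward fills `bottom->diff` from the top.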

Constructor & Destructor Documentation

◆ Layer()

explicit Layer (const LayerParameter &param) [inline, explicit]

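You should not implement your own constructor. Any setup code should go in SetUp(), where the dimensions of the bottom blobs are provided to the layer. Because SetUp() itself may not be overridden, layer-specific setup belongs in LayerSetUp() and Reshape(), which SetUp() calls.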

Member Function Documentation

◆ AllowForceBackward()

virtual bool AllowForceBackward (const int bottom_index) const [inline, virtual]

Return whether to allow force_backward for a given bottom blob index.

If AllowForceBackward(i) == false, we will ignore the force_backward setting and backpropagate to blob i only if it needs gradient information (as is done when force_backward == false).
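The rule above can be read as a small boolean expression. The helper below is a hypothetical sketch of that reading, not a function from Caffe; its name and parameters are invented for illustration.

```cpp
// Hypothetical helper: decides whether gradients are propagated to one
// bottom blob, following the rule described for AllowForceBackward().
//   needs_gradient       - the blob needs gradient information anyway
//   force_backward       - the net-level force_backward setting
//   allow_force_backward - the layer's AllowForceBackward(i) result
bool ShouldBackpropagate(bool needs_gradient,
                         bool force_backward,
                         bool allow_force_backward) {
  // force_backward only has an effect when the layer permits it.
  return needs_gradient || (force_backward && allow_force_backward);
}
```

When `allow_force_backward` is false, the result depends only on `needs_gradient`, which is the same outcome as running with force_backward == false.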

Reimplemented in caffe::LSTMUnitLayer< Dtype >, caffe::RecurrentLayer< Dtype >, caffe::EuclideanLossLayer< Dtype >, caffe::ContrastiveLossLayer< Dtype >, and caffe::LossLayer< Dtype >.

◆ AutoTopBlobs()

virtual bool AutoTopBlobs () const [inline, virtual]

Return whether "anonymous" top blobs are created automatically by the layer.

If this method returns true, Net::Init will create enough "anonymous" top blobs to fulfill the requirement specified by ExactNumTopBlobs() or MinTopBlobs().

Reimplemented in caffe::LossLayer< Dtype >.

◆ Backward()

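void Backward (const vector< Blob< Dtype > *> &top, const vector< bool > &propagate_down, const vector< Blob< Dtype > *> &bottom) [inline]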

Given the top blob error gradients, compute the bottom blob error gradients.

- Parameters
  - top: the output blobs, whose diff fields store the gradient of the error with respect to themselves
  - propagate_down: a vector with equal length to bottom, with each index indicating whether to propagate the error gradients down to the bottom blob at the corresponding index
  - bottom: the input blobs, whose diff fields will store the gradient of the error with respect to themselves after Backward is run

The Backward wrapper calls the relevant device wrapper function (Backward_cpu or Backward_gpu) to compute the bottom blob diffs given the top blob diffs.

Your layer should implement Backward_cpu and (optionally) Backward_gpu.
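To show how propagate_down is typically consulted, here is a minimal sketch of a backward pass for a layer that sums its inputs. `GradBlob` and `SumBackward` are hypothetical names used only for this example; an actual Backward_cpu receives caffe::Blob pointers and writes through their diff accessors.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for caffe::Blob; only the gradient buffer is needed.
struct GradBlob {
  std::vector<float> diff;
};

// Backward pass of a hypothetical layer computing
//   top = bottom[0] + bottom[1] + ...
// The derivative of the sum with respect to each input is 1, so every
// selected bottom receives a copy of the top gradient.
void SumBackward(const GradBlob& top,
                 const std::vector<bool>& propagate_down,
                 const std::vector<GradBlob*>& bottom) {
  for (std::size_t i = 0; i < bottom.size(); ++i) {
    if (!propagate_down[i]) continue;  // this input needs no gradient
    bottom[i]->diff = top.diff;
  }
}
```

Bottoms whose flag is false are left untouched, which avoids computing gradients that nothing downstream will read.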

◆ CheckBlobCounts()

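virtual void CheckBlobCounts (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) [inline, protected, virtual]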

Called by the parent Layer's SetUp to check that the number of bottom and top Blobs provided as input match the expected numbers specified by the {ExactNum,Min,Max}{Bottom,Top}Blobs() functions.
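The -1 convention used by the {ExactNum,Min,Max}{Bottom,Top}Blobs() functions can be sketched as follows. `BlobCountSpec` and `CountSatisfies` are hypothetical names for this illustration, and the sketch returns a bool where the real check is assumed to abort on a mismatch.

```cpp
// Hypothetical bundle of the three constraints for one side (bottom or top).
// A value of -1 means "no requirement", matching the documented convention.
struct BlobCountSpec {
  int exact = -1;
  int min = -1;
  int max = -1;
};

// Returns true if `count` blobs satisfy every constraint that is set.
bool CountSatisfies(const BlobCountSpec& spec, int count) {
  if (spec.exact >= 0 && count != spec.exact) return false;
  if (spec.min >= 0 && count < spec.min) return false;
  if (spec.max >= 0 && count > spec.max) return false;
  return true;
}
```

A layer that overrides none of the count functions leaves all three values at -1, so any number of blobs passes the check.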

◆ EqualNumBottomTopBlobs()

virtual bool EqualNumBottomTopBlobs () const [inline, virtual]

Returns true if the layer requires an equal number of bottom and top blobs.

This method should be overridden to return true if your layer expects an equal number of bottom and top blobs.

Reimplemented in caffe::BaseConvolutionLayer< Dtype >.

◆ ExactNumBottomBlobs()

virtual int ExactNumBottomBlobs () const [inline, virtual]

Returns the exact number of bottom blobs required by the layer, or -1 if no exact number is required.

This method should be overridden to return a non-negative value if your layer expects some exact number of bottom blobs.

Reimplemented in caffe::LSTMUnitLayer< Dtype >, caffe::InfogainLossLayer< Dtype >, caffe::BatchNormLayer< Dtype >, caffe::ArgMaxLayer< Dtype >, caffe::ContrastiveLossLayer< Dtype >, caffe::AccuracyLayer< Dtype >, caffe::HDF5OutputLayer< Dtype >, caffe::HDF5DataLayer< Dtype >, caffe::WindowDataLayer< Dtype >, caffe::AbsValLayer< Dtype >, caffe::LRNLayer< Dtype >, caffe::ImageDataLayer< Dtype >, caffe::LossLayer< Dtype >, caffe::CropLayer< Dtype >, caffe::FlattenLayer< Dtype >, caffe::DummyDataLayer< Dtype >, caffe::EmbedLayer< Dtype >, caffe::Im2colLayer< Dtype >, caffe::InputLayer< Dtype >, caffe::ReductionLayer< Dtype >, caffe::BatchReindexLayer< Dtype >, caffe::InnerProductLayer< Dtype >, caffe::ParameterLayer< Dtype >, caffe::ReshapeLayer< Dtype >, caffe::SliceLayer< Dtype >, caffe::SPPLayer< Dtype >, caffe::MemoryDataLayer< Dtype >, caffe::PoolingLayer< Dtype >, caffe::SplitLayer< Dtype >, caffe::MVNLayer< Dtype >, caffe::NeuronLayer< Dtype >, caffe::SoftmaxLayer< Dtype >, caffe::DataLayer< Dtype >, and caffe::TileLayer< Dtype >.

◆ ExactNumTopBlobs()

virtual int ExactNumTopBlobs () const [inline, virtual]

Returns the exact number of top blobs required by the layer, or -1 if no exact number is required.

This method should be overridden to return a non-negative value if your layer expects some exact number of top blobs.

Reimplemented in caffe::LSTMUnitLayer< Dtype >, caffe::InfogainLossLayer< Dtype >, caffe::SoftmaxWithLossLayer< Dtype >, caffe::BatchNormLayer< Dtype >, caffe::ArgMaxLayer< Dtype >, caffe::RecurrentLayer< Dtype >, caffe::LossLayer< Dtype >, caffe::ScaleLayer< Dtype >, caffe::HDF5OutputLayer< Dtype >, caffe::WindowDataLayer< Dtype >, caffe::AbsValLayer< Dtype >, caffe::BiasLayer< Dtype >, caffe::LRNLayer< Dtype >, caffe::ImageDataLayer< Dtype >, caffe::CropLayer< Dtype >, caffe::FlattenLayer< Dtype >, caffe::EmbedLayer< Dtype >, caffe::Im2colLayer< Dtype >, caffe::ReductionLayer< Dtype >, caffe::BatchReindexLayer< Dtype >, caffe::EltwiseLayer< Dtype >, caffe::InnerProductLayer< Dtype >, caffe::ParameterLayer< Dtype >, caffe::ReshapeLayer< Dtype >, caffe::SPPLayer< Dtype >, caffe::MemoryDataLayer< Dtype >, caffe::ConcatLayer< Dtype >, caffe::MVNLayer< Dtype >, caffe::NeuronLayer< Dtype >, caffe::SoftmaxLayer< Dtype >, caffe::SilenceLayer< Dtype >, and caffe::TileLayer< Dtype >.

◆ Forward()

Dtype Forward (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) [inline]

Given the bottom blobs, compute the top blobs and the loss.

- Parameters
  - bottom: the input blobs, whose data fields store the input data for this layer
  - top: the preshaped output blobs, whose data fields will store this layer's outputs
- Returns: the total loss from the layer.

The Forward wrapper calls the relevant device wrapper function (Forward_cpu or Forward_gpu) to compute the top blob values given the bottom blobs. If the layer has any non-zero loss_weights, the wrapper then computes and returns the loss.

Your layer should implement Forward_cpu and (optionally) Forward_gpu.
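The loss returned by the wrapper can be pictured as a weighted sum over the top blobs. The sketch below assumes one scalar loss weight per top, a simplification made for brevity; `WeightedTop` and `TotalLoss` are hypothetical names, not part of Caffe.

```cpp
#include <vector>

// Hypothetical stand-in for one top blob and its loss weight.
struct WeightedTop {
  std::vector<float> data;   // values written by the forward pass
  float loss_weight = 0.0f;  // zero means this top does not contribute
};

// Sums loss_weight * value over every top with a non-zero loss weight.
float TotalLoss(const std::vector<WeightedTop>& tops) {
  float loss = 0.0f;
  for (const WeightedTop& top : tops) {
    if (top.loss_weight == 0.0f) continue;  // skip non-loss outputs
    for (float value : top.data) {
      loss += top.loss_weight * value;
    }
  }
  return loss;
}
```

A layer whose tops all have zero loss weight therefore reports a loss of 0 from its forward pass.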

◆ LayerSetUp()

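virtual void LayerSetUp (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) [inline, virtual]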

Does layer-specific setup: your layer should implement this function as well as Reshape.

- Parameters
  - bottom: the preshaped input blobs, whose data fields store the input data for this layer
  - top: the allocated but unshaped output blobs

This method should do one-time layer-specific setup. This includes reading and processing relevant parameters from the layer_param_. Setting up the shapes of top blobs and internal buffers should be done in Reshape, which will be called before the forward pass to adjust the top blob sizes.

Reimplemented in caffe::BasePrefetchingDataLayer< Dtype >, caffe::SoftmaxWithLossLayer< Dtype >, caffe::InfogainLossLayer< Dtype >, caffe::SigmoidCrossEntropyLossLayer< Dtype >, caffe::BatchNormLayer< Dtype >, caffe::ContrastiveLossLayer< Dtype >, caffe::ArgMaxLayer< Dtype >, caffe::DropoutLayer< Dtype >, caffe::PReLULayer< Dtype >, caffe::ExpLayer< Dtype >, caffe::LogLayer< Dtype >, caffe::AccuracyLayer< Dtype >, caffe::PowerLayer< Dtype >, caffe::RecurrentLayer< Dtype >, caffe::ScaleLayer< Dtype >, caffe::AbsValLayer< Dtype >, caffe::HDF5OutputLayer< Dtype >, caffe::ThresholdLayer< Dtype >, caffe::HDF5DataLayer< Dtype >, caffe::BaseDataLayer< Dtype >, caffe::LossLayer< Dtype >, caffe::LRNLayer< Dtype >, caffe::BiasLayer< Dtype >, caffe::CropLayer< Dtype >, caffe::EmbedLayer< Dtype >, caffe::Im2colLayer< Dtype >, caffe::ReductionLayer< Dtype >, caffe::DummyDataLayer< Dtype >, caffe::EltwiseLayer< Dtype >, caffe::FilterLayer< Dtype >, caffe::InnerProductLayer< Dtype >, caffe::InputLayer< Dtype >, caffe::ReshapeLayer< Dtype >, caffe::SliceLayer< Dtype >, caffe::SPPLayer< Dtype >, caffe::BaseConvolutionLayer< Dtype >, caffe::PoolingLayer< Dtype >, caffe::ConcatLayer< Dtype >, caffe::PythonLayer< Dtype >, and caffe::ParameterLayer< Dtype >.

◆ MaxBottomBlobs()

virtual int MaxBottomBlobs () const [inline, virtual]

Returns the maximum number of bottom blobs required by the layer, or -1 if no maximum number is required.

This method should be overridden to return a non-negative value if your layer expects some maximum number of bottom blobs.

Reimplemented in caffe::InfogainLossLayer< Dtype >, caffe::RecurrentLayer< Dtype >, caffe::ScaleLayer< Dtype >, and caffe::BiasLayer< Dtype >.

◆ MaxTopBlobs()

virtual int MaxTopBlobs () const [inline, virtual]

Returns the maximum number of top blobs required by the layer, or -1 if no maximum number is required.

This method should be overridden to return a non-negative value if your layer expects some maximum number of top blobs.

Reimplemented in caffe::InfogainLossLayer< Dtype >, caffe::SoftmaxWithLossLayer< Dtype >, caffe::AccuracyLayer< Dtype >, caffe::PoolingLayer< Dtype >, and caffe::DataLayer< Dtype >.

◆ MinBottomBlobs()

virtual int MinBottomBlobs () const [inline, virtual]

Returns the minimum number of bottom blobs required by the layer, or -1 if no minimum number is required.

This method should be overridden to return a non-negative value if your layer expects some minimum number of bottom blobs.

Reimplemented in caffe::InfogainLossLayer< Dtype >, caffe::RecurrentLayer< Dtype >, caffe::ScaleLayer< Dtype >, caffe::BiasLayer< Dtype >, caffe::EltwiseLayer< Dtype >, caffe::FilterLayer< Dtype >, caffe::BaseConvolutionLayer< Dtype >, caffe::ConcatLayer< Dtype >, and caffe::SilenceLayer< Dtype >.

◆ MinTopBlobs()

virtual int MinTopBlobs () const [inline, virtual]

Returns the minimum number of top blobs required by the layer, or -1 if no minimum number is required.

This method should be overridden to return a non-negative value if your layer expects some minimum number of top blobs.

Reimplemented in caffe::InfogainLossLayer< Dtype >, caffe::SoftmaxWithLossLayer< Dtype >, caffe::AccuracyLayer< Dtype >, caffe::HDF5DataLayer< Dtype >, caffe::DummyDataLayer< Dtype >, caffe::InputLayer< Dtype >, caffe::FilterLayer< Dtype >, caffe::SliceLayer< Dtype >, caffe::PoolingLayer< Dtype >, caffe::BaseConvolutionLayer< Dtype >, caffe::SplitLayer< Dtype >, and caffe::DataLayer< Dtype >.

◆ param_propagate_down()

bool param_propagate_down (const int param_id) [inline]

Specifies whether the layer should compute gradients w.r.t. a parameter at a particular index given by param_id.

You can safely ignore false values and always compute gradients for all parameters, but possibly with wasteful computation.

◆ Reshape()

virtual void Reshape (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top)=0 [pure virtual]

Adjust the shapes of top blobs and internal buffers to accommodate the shapes of the bottom blobs.

- Parameters
  - bottom: the input blobs, with the requested input shapes
  - top: the top blobs, which should be reshaped as needed

This method should reshape top blobs as needed according to the shapes of the bottom (input) blobs, as well as reshaping any internal buffers and making any other necessary adjustments so that the layer can accommodate the bottom blobs.
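For an element-wise layer, this reshaping step might look like the sketch below, where the top takes the bottom's shape and a scratch buffer is resized to match. `ShapedBlob` and `ElementwiseReshape` are hypothetical names chosen for the example and only mimic the idea of a blob with a shape; they are not Caffe's classes.

```cpp
#include <cstddef>
#include <vector>

// Hypothetical stand-in for a blob that tracks its shape and storage.
struct ShapedBlob {
  std::vector<int> shape;
  std::vector<float> data;

  // Adopts the new shape and resizes storage to the product of its dimensions.
  void Reshape(const std::vector<int>& new_shape) {
    shape = new_shape;
    std::size_t count = 1;
    for (int dim : new_shape) count *= static_cast<std::size_t>(dim);
    data.resize(count);
  }
};

// Reshape step of a hypothetical element-wise layer: the top mirrors the
// bottom's shape, and the internal scratch buffer gets one slot per element.
void ElementwiseReshape(const ShapedBlob& bottom,
                        ShapedBlob* top,
                        std::vector<float>* scratch) {
  top->Reshape(bottom.shape);
  scratch->resize(bottom.data.size());
}
```

Because the function derives everything from the current bottom shape, it can be called again whenever the input shape changes.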

Implemented in caffe::LSTMUnitLayer< Dtype >, caffe::SoftmaxWithLossLayer< Dtype >, caffe::InfogainLossLayer< Dtype >, caffe::SigmoidCrossEntropyLossLayer< Dtype >, caffe::MultinomialLogisticLossLayer< Dtype >, caffe::BatchNormLayer< Dtype >, caffe::EuclideanLossLayer< Dtype >, caffe::ArgMaxLayer< Dtype >, caffe::PReLULayer< Dtype >, caffe::DropoutLayer< Dtype >, caffe::AccuracyLayer< Dtype >, caffe::BaseDataLayer< Dtype >, caffe::HDF5OutputLayer< Dtype >, caffe::PythonLayer< Dtype >, caffe::RecurrentLayer< Dtype >, caffe::ScaleLayer< Dtype >, caffe::HDF5DataLayer< Dtype >, caffe::LossLayer< Dtype >, caffe::LRNLayer< Dtype >, caffe::BiasLayer< Dtype >, caffe::CropLayer< Dtype >, caffe::FlattenLayer< Dtype >, caffe::DummyDataLayer< Dtype >, caffe::EmbedLayer< Dtype >, caffe::Im2colLayer< Dtype >, caffe::InputLayer< Dtype >, caffe::ParameterLayer< Dtype >, caffe::ReductionLayer< Dtype >, caffe::BatchReindexLayer< Dtype >, caffe::EltwiseLayer< Dtype >, caffe::FilterLayer< Dtype >, caffe::InnerProductLayer< Dtype >, caffe::ReshapeLayer< Dtype >, caffe::SliceLayer< Dtype >, caffe::SPPLayer< Dtype >, caffe::BaseConvolutionLayer< Dtype >, caffe::PoolingLayer< Dtype >, caffe::ConcatLayer< Dtype >, caffe::NeuronLayer< Dtype >, caffe::SplitLayer< Dtype >, caffe::MVNLayer< Dtype >, caffe::SoftmaxLayer< Dtype >, caffe::SilenceLayer< Dtype >, and caffe::TileLayer< Dtype >.

◆ SetLossWeights()

void SetLossWeights (const vector< Blob< Dtype > *> &top) [inline, protected]

Called by SetUp to initialize the weights associated with any top blobs in the loss function. Store non-zero loss weights in the diff blob.
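One way to picture storing a loss weight in the diff blob is to fill the gradient buffer of a top with that weight. The following is a sketch under that assumption; `LossBlob` and `FillLossWeight` are hypothetical names, not Caffe's implementation.

```cpp
#include <vector>

// Hypothetical stand-in for a top blob with data and diff buffers.
struct LossBlob {
  std::vector<float> data;
  std::vector<float> diff;
};

// Writes a non-zero loss weight into every element of the diff buffer.
// A zero weight leaves the blob untouched, since it carries no loss.
void FillLossWeight(float loss_weight, LossBlob* top) {
  if (loss_weight == 0.0f) return;
  top->diff.assign(top->data.size(), loss_weight);
}
```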

◆ SetUp()

void SetUp (const vector< Blob< Dtype > *> &bottom, const vector< Blob< Dtype > *> &top) [inline]

Implements common layer setup functionality.

- Parameters
  - bottom: the preshaped input blobs
  - top: the allocated but unshaped output blobs, to be shaped by Reshape

Checks that the number of bottom and top blobs is correct. Calls LayerSetUp to do special layer setup for individual layer types, followed by Reshape to set up sizes of top blobs and internal buffers. Sets up the loss weight multiplier blobs for any non-zero loss weights. This method may not be overridden.
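The sequence described above follows the template-method pattern: a fixed, non-virtual driver that calls overridable hooks in order. The sketch below records that call order using hypothetical classes (`SketchLayer`, `MySketchLayer`) with argument lists omitted; it illustrates the pattern and is not a copy of Caffe's code.

```cpp
#include <string>
#include <vector>

// Hypothetical base class mirroring the SetUp sequence described above.
class SketchLayer {
 public:
  virtual ~SketchLayer() {}

  // Non-virtual driver: subclasses customise the hooks, not the order.
  void SetUp() {
    CheckBlobCounts();
    LayerSetUp();
    Reshape();
    SetLossWeights();
  }

  // Hooks that a concrete layer is expected to provide.
  virtual void LayerSetUp() { calls_.push_back("LayerSetUp"); }
  virtual void Reshape() = 0;

  const std::vector<std::string>& calls() const { return calls_; }

 protected:
  virtual void CheckBlobCounts() { calls_.push_back("CheckBlobCounts"); }
  void SetLossWeights() { calls_.push_back("SetLossWeights"); }

  std::vector<std::string> calls_;  // log of the hooks that have run
};

// Hypothetical concrete layer overriding the two layer-specific hooks.
class MySketchLayer : public SketchLayer {
 public:
  void LayerSetUp() override { calls_.push_back("MySketchLayer::LayerSetUp"); }
  void Reshape() override { calls_.push_back("MySketchLayer::Reshape"); }
};
```

Calling `SetUp()` on a `MySketchLayer` runs the blob-count check first, then the two overridden hooks, then the loss-weight step, regardless of what the subclass overrides.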

Member Data Documentation

◆ blobs_

vector< shared_ptr< Blob< Dtype > > > blobs_ [protected]

The vector that stores the learnable parameters as a set of blobs.

◆ layer_param_

LayerParameter layer_param_ [protected]

The protobuf that stores the layer parameters.

◆ loss_

vector< Dtype > loss_ [protected]

The vector that indicates whether each top blob has a non-zero weight in the objective function.

◆ param_propagate_down_

vector< bool > param_propagate_down_ [protected]

Vector indicating whether to compute the diff of each param blob.

◆ phase_

Phase phase_ [protected]

The phase: TRAIN or TEST

The documentation for this class was generated from the following file:

- include/caffe/layer.hpp

1.8.13
